You can have 90% customer satisfaction scores on individual interactions but a low overall NPS. Why? Customers may love the effort you put into supporting them, but not the fact that it took five support requests to get there. Customer experience is an art and a science, and the art bit is done by adding vision to the voice of the customer. Below we'll cover key problems with data analysis and then show you how to add vision.
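To see how both numbers can be true at once, here's a minimal Python sketch with made-up data (the customers, the scores and the usual scale cut-offs are assumptions for illustration, not Upscope figures). CSAT is counted per interaction, so a customer who needed five requests casts five happy votes, while NPS gives each customer a single vote.

```python
# Illustrative, made-up data: each customer rated every support interaction
# on a 1-5 scale and gave one 0-10 "how likely are you to recommend us?" score.
customers = [
    {"interaction_ratings": [5, 5, 5, 5, 4], "nps_score": 3},  # needed 5 requests
    {"interaction_ratings": [5, 5, 5, 5, 5], "nps_score": 4},  # also needed 5
    {"interaction_ratings": [5], "nps_score": 9},              # solved first time
    {"interaction_ratings": [4, 2], "nps_score": 6},
]


def csat(customers: list[dict]) -> float:
    """Share of all interactions rated 4 or 5; every interaction counts once."""
    ratings = [r for c in customers for r in c["interaction_ratings"]]
    return sum(r >= 4 for r in ratings) / len(ratings)


def nps(customers: list[dict]) -> float:
    """% promoters (9-10) minus % detractors (0-6); every customer counts once."""
    scores = [c["nps_score"] for c in customers]
    promoters = sum(s >= 9 for s in scores)
    detractors = sum(s <= 6 for s in scores)
    return 100 * (promoters - detractors) / len(scores)


print(f"CSAT: {csat(customers):.0%}")  # CSAT: 92%
print(f"NPS: {nps(customers):.0f}")    # NPS: -50
```

Twelve of the thirteen interactions are rated 4 or 5, yet three of the four customers are detractors.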

What's VOC and how do companies measure it?

According to Wikipedia, voice of the customer (VOC) is the "process of capturing customer's expectations, preferences and aversions. Specifically, the Voice of the Customer is a market research technique that produces a detailed set of customer wants and needs".

Here's how HubSpot describes it:

"This process captures everything that customers are saying about a business, product, or service and packages those ideas into an overall perspective of the brand. Companies use VoC to visualize the gap between customer expectations and their actual experience with the business."

How do you go about doing this?

Customer interviews, surveys, NPS, live chat, customer reviews, feedback forms, social media and more.

The underlying problem with data is that people post-rationalise

This article contains a powerful list of key points on the dangers of surveys, including:

- "When people answer direct questions they attempt to rationalise their decisions and behaviour"
- "We are prone to making sub-optimal and often irrational decisions. This is contrary to how we like to perceive ourselves and naturally we don’t articulate this when answering survey questions"
- "Relative importance of an item is heavily influenced by the ease with which we can retrieve it from our memory"

Read more here; it's a great summary.

At Upscope we realised people were copying and pasting our example answer

Upscope had a form asking customers "Where did you first hear about Upscope?", with the example answer "e.g. Googled co-browsing services".

Around 5% of the answers said "Googled co-browsing services".

This made the marketing team think, "OK, we should double down on that and create more ads for that search term, as it works."

It turns out that new sign-ups were simply copying and pasting "Googled co-browsing services" or typing it in directly, with various spelling mistakes.

We discovered something both obvious and funny. In some countries people are not familiar with the abbreviation "e.g.", and of course some people just wanted to get the form done: the example was either a close approximation of their search term or had nothing to do with how they found us.
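If you collect free-text answers like this, a quick check can flag answers that echo the example before anyone acts on them. The sketch below uses Python's standard `difflib.SequenceMatcher` to catch near-copies, including ones typed in with typos. The 0.8 similarity threshold and the sample answers are assumptions you'd tune against your own data, and this isn't a description of how Upscope actually spotted the problem.

```python
from difflib import SequenceMatcher

# The example text shown in the form (taken from the story above).
PLACEHOLDER = "Googled co-browsing services"
# Assumed cut-off: 1.0 means identical, so 0.8 tolerates a few typos.
THRESHOLD = 0.8


def normalise(text: str) -> str:
    """Lower-case and collapse whitespace so trivial differences are ignored."""
    return " ".join(text.lower().split())


def echoes_placeholder(answer: str) -> bool:
    """True if the answer is a near-copy of the example text."""
    ratio = SequenceMatcher(None, normalise(answer), normalise(PLACEHOLDER)).ratio()
    return ratio >= THRESHOLD


# Hypothetical sample answers.
answers = [
    "Googled co-browsing services",
    "googled cobrowsing servises",  # typed in with spelling mistakes
    "A friend at work",
    "Saw you on Product Hunt",
]
echoed = [a for a in answers if echoes_placeholder(a)]
genuine = [a for a in answers if not echoes_placeholder(a)]
print(f"{len(echoed)} of {len(answers)} answers echo the example")  # 2 of 4 answers echo the example
```

Flagged answers are better reported on their own than counted as evidence that a channel works.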

5-star ratings all the way through but a 3-star overall? Yes

Here's an example by McKinsey showing the importance of end-to-end customer experience. The individual steps were great but the overall score was not.

This is partly why Intercom co-founder Des Traynor suggested that someone in your company should sign up to your own service every two weeks and go through the whole process to check the overall experience.

Another example: at Upscope, we realised we were sending too many emails from several functions, but no-one had complained until someone finally said "the emails were a little intense".

New technology distorts the data further

Google now have automated replies built into Gmail.

Let's say you send a very angry customer an email confirming that you've completed a task for them and, rather than typing out a full reply, they simply select Google's preset "Awesome, thanks!" reply.

Over time you've got 1000s of replies that suggest everything is awesome.

It's definitely not.
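One way to stop one-tap replies inflating your sentiment numbers is to separate them from replies people actually wrote before scoring anything. A minimal sketch, assuming you maintain your own list of suggested-reply phrases: apart from "Awesome, thanks!", the phrases below are hypothetical placeholders, not Gmail's actual list.

```python
# Hypothetical list of one-tap suggested replies. Only "Awesome, thanks!"
# comes from the example above; add the phrases you actually see.
CANNED_REPLIES = {"awesome, thanks!", "thanks!", "got it, thanks!", "sounds good!"}


def normalise(text: str) -> str:
    """Lower-case and collapse whitespace before comparing."""
    return " ".join(text.lower().split())


def split_replies(replies: list[str]) -> tuple[list[str], list[str]]:
    """Separate canned one-tap replies from replies the customer wrote."""
    canned = [r for r in replies if normalise(r) in CANNED_REPLIES]
    written = [r for r in replies if normalise(r) not in CANNED_REPLIES]
    return canned, written


replies = [
    "Awesome, thanks!",
    "awesome,  thanks!",
    "Thanks, but this is the third time I've had to ask.",
]
canned, written = split_replies(replies)
print(f"{len(canned)} canned, {len(written)} written")  # 2 canned, 1 written
```

The written replies carry the real signal; the canned ones are probably closer to a read receipt than a rating.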

"Because if you know customers are having problems, it should be your goal to get to the root of it. On the flip side, if you know where customers are finding enjoyment in your product, you should want to find out why so you can expand on it."

"VoC best practices stress that you ask probing questions and not just set yourself up to receive positive remarks"

Read more on Gainsight's view of the voice of the customer.

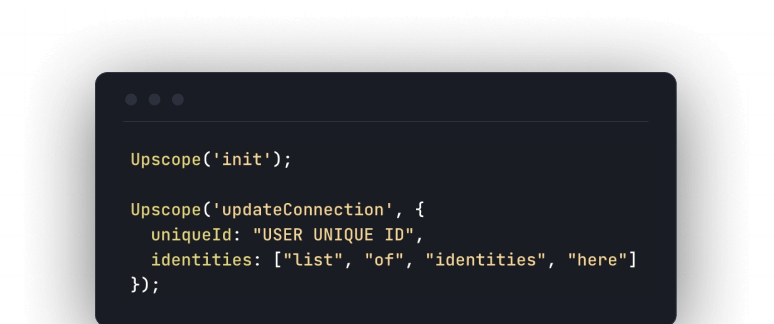

How to add vision to VOC to give context to data

On top of all the visitor analytics, text analytics, social media mood measuring, surveys and NPS scores, you can add screen recordings and one-to-one co-browsing sessions.

The visual side is hard.

It's not hard to implement; it's hard to watch the truth.

When you've spent months redesigning your website flow and then watch customers fumble their way through it, struggling on parts you never imagined would cause problems, that's tough.

When your data suggests everything is OK but it turns out customers simply aren't bothering to complain, you end up questioning your own strategy.

Add screen recording with built-in struggle detection

Some companies use screen recording tools like SessionCam, which can be integrated directly into customer experience tools such as Qualtrics and Medallia.

These tools can include features like "struggle detection", which detects when customers struggle with an interface and records that session for you to view. This can then be combined with your other data to give you a new set of assumptions to test.

Looking at a recording, spotting a problem and immediately making changes is just as bad as relying on the data alone. The recording gives us a clue about what might be missing from the data, and that gives us assumptions to test. The process is still data-driven.
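SessionCam's own struggle-detection logic isn't described here, so as a stand-in, here's a sketch of one simple signal often used for this, the "rage click": the same element clicked repeatedly in a short time. The `Click` format, the 2-second window and the 3-click minimum are all assumptions. Treat flagged sessions as ones worth watching, in line with the point above that a recording suggests an assumption to test rather than a conclusion.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Click:
    timestamp: float  # seconds since the session started
    element: str      # hypothetical element identifier, e.g. a CSS selector


def rage_clicked_elements(clicks: list[Click], window: float = 2.0,
                          min_clicks: int = 3) -> set[str]:
    """Return elements clicked at least `min_clicks` times within `window` seconds."""
    recent: dict[str, deque] = {}
    flagged: set[str] = set()
    for click in sorted(clicks, key=lambda c: c.timestamp):
        times = recent.setdefault(click.element, deque())
        times.append(click.timestamp)
        # Slide the window: forget clicks on this element older than `window`.
        while click.timestamp - times[0] > window:
            times.popleft()
        if len(times) >= min_clicks:
            flagged.add(click.element)
    return flagged


# Hypothetical session: three quick clicks on a submit button that didn't respond.
session = [
    Click(1.0, "#submit"), Click(1.4, "#submit"), Click(1.9, "#submit"),
    Click(5.0, "#email"), Click(12.0, "#email"),
]
print(rage_clicked_elements(session))  # {'#submit'}
```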

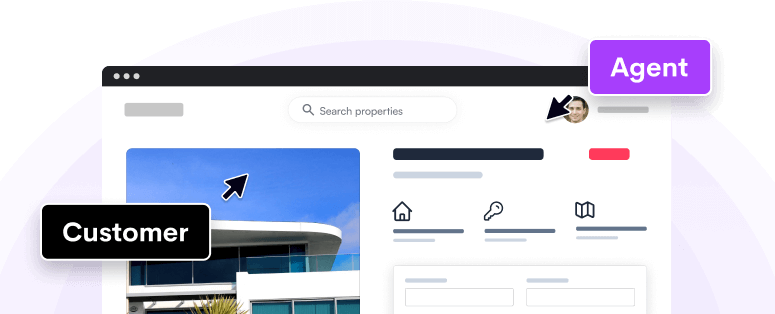

Co-browsing and one-to-one sessions

Talking to someone while watching them navigate through your website is painful but rewarding.

Co-browsing is a form of interactive screen sharing built for one-to-one onboarding because there are no downloads or installs, and you can click and scroll with the customer on their screen.

The difference between this and screen recording is that you get to ask the customer what they don't understand and take notes as you go along.

This is often where you'll discover the real reason they struggled with part of a form or the navigation, what they understood from a set of instructions, and what's unclear.

Some companies are taking one-to-one onboarding to an extreme

Imagine Google onboarding every single person, one-to-one, when they signed up for a Gmail account.

Crazy?

Superhuman are doing it for their email product.

Scroll down to the first point in this article to read more about positioning your product in someone's mind and how that fits with onboarding them, along with Superhuman's super crazy plan.

Imagine the feedback they get from doing this. Many companies can't do it, but Superhuman are scaling one-to-one sessions and likely have rapid, accurate product iterations.

Read next: 8 Companies Show you their Customer Experience Strategy